Nobody cares how accurate your healthcare AI model is if it can't pass a compliance review. That's the reality of building in this space right now.

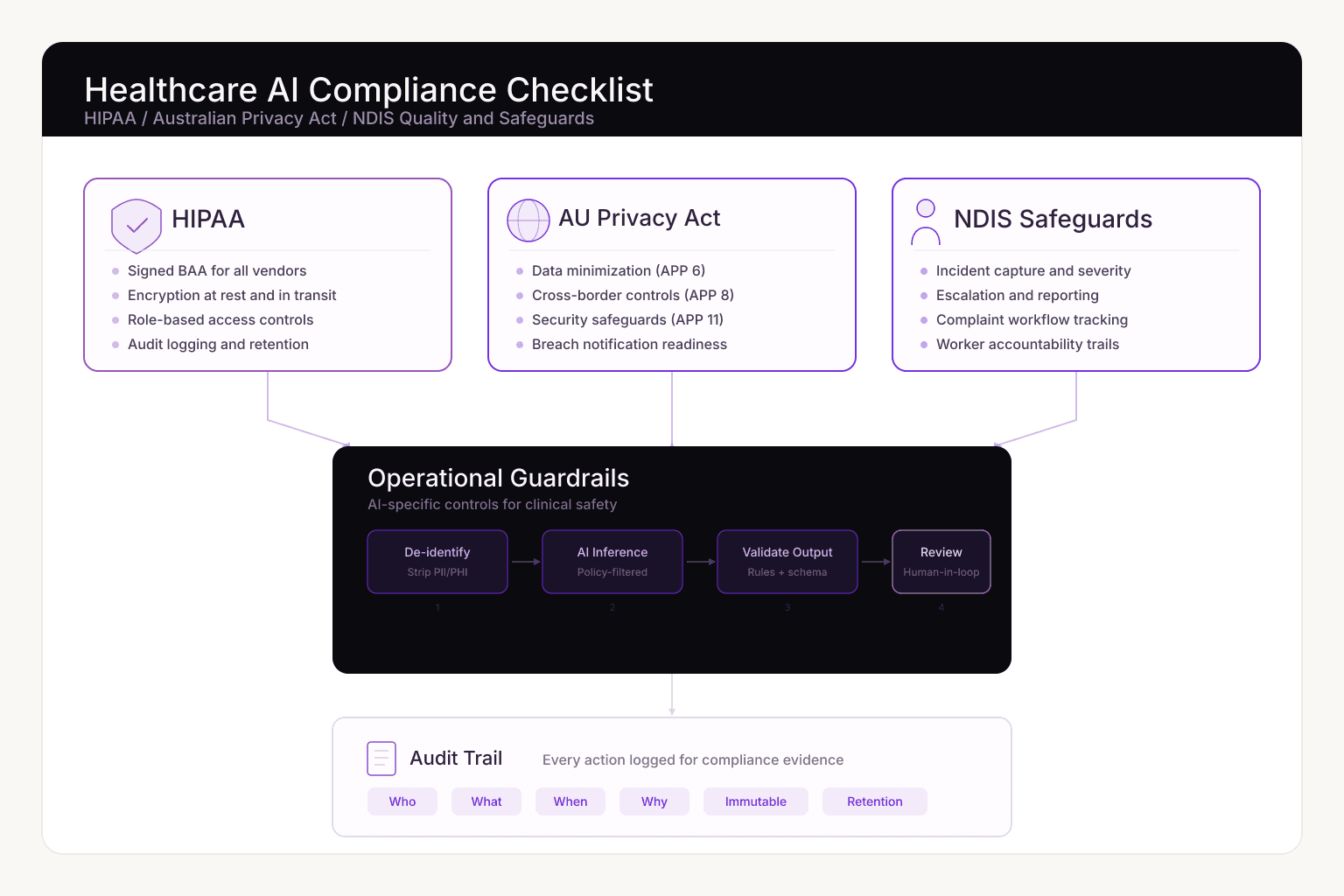

And the review isn't simple. US organizations need HIPAA-aligned controls. Australian teams have to satisfy the Privacy Act and Australian Privacy Principles (APPs). NDIS providers need evidence handling and incident governance on top of that. If your system can't demonstrate these controls clearly, you're carrying real legal and financial risk.

We put this checklist together for product leads and engineering teams who are either building healthcare AI internally or evaluating partners to do it.

We keep seeing the same pattern: teams build the AI features first, then try to bolt on compliance before launch. It rarely works. In healthcare, compliance needs to be baked into the architecture from the start.

Here's why:

The approach that works is treating legal requirements and technical controls as the same thing, and validating both continuously.

Use this as a release gate for healthcare AI systems that process patient or participant-related data.

| Domain | Minimum production-ready requirement | Evidence expected |
|---|---|---|
| HIPAA controls | Signed BAA, encryption at rest/in transit, role-based access | BAA record, key management docs, access matrix |
| AU Privacy Act / APPs | Data minimization, purpose limitation, cross-border controls | Data flow map, retention rules, subprocessors list |
| NDIS safeguards | Incident workflows, complaint tracking, worker accountability | Incident registers, escalation policies, audit logs |
| AI governance | Prompt/data filtering, hallucination controls, human review | Eval reports, approval checkpoints, exception logs |
| Auditability | End-to-end event logging tied to user actions | Immutable logs, retention policy, review process |
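To make the auditability row a bit more concrete, here is a minimal sketch of a tamper-evident log in which every entry is tied to a user action and chained to the previous entry by hash. The `AuditLog` class, its field names, and the in-memory list are assumptions for illustration only. A hash chain makes edits detectable, not impossible, so a production system would typically pair it with write-once storage and a retention policy.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Hypothetical append-only log; entries are chained by SHA-256 hash."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(record):
        # Hash every field except the stored hash itself, in a stable key order.
        body = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, user_id, action, resource):
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "action": action,
            "resource": resource,
            "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)
        self._entries.append(record)
        return record

    def verify(self):
        # Walk the chain; any edited or reordered entry breaks a hash link.
        prev_hash = GENESIS_HASH
        for record in self._entries:
            if record["prev_hash"] != prev_hash:
                return False
            if record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True
```

Running `verify()` on a schedule, and recording the result, is one way to produce the "review process" evidence the table asks for.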

A lot of teams think HIPAA compliance is mostly about encryption. It's not. Audits look at the full picture of how your system handles protected data.

If you're serving Australian users, you need controls that map to the Australian Privacy Principles. Here's what that looks like in practice.

NDIS regulators and quality teams want to see that your participant safety controls actually work in practice, not just on paper.

Standard privacy controls aren't enough when you're running AI models against clinical data. You also need to manage the risks that come from the model itself.

Remove direct identifiers before model calls wherever workflow allows. Keep reversible mapping in a separate secured service.
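As a rough sketch of that idea, the snippet below swaps a few identifier types for opaque tokens before text is sent to a model, and keeps the reverse mapping in a separate object. Everything here is an assumption for illustration: the regex patterns cover only three identifier types, and `TokenVault` is an in-memory stand-in for what would be a separately secured service with its own access controls. Real PHI detection needs a vetted tool and far broader coverage.

```python
import re
import uuid

# Hypothetical patterns; illustrative only and far from complete PHI coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d{6,10}\b"),
}


class TokenVault:
    """Stand-in for the separate secured mapping service (in-memory here)."""

    def __init__(self):
        self._forward = {}  # original value -> token
        self._reverse = {}  # token -> original value

    def tokenize(self, kind, value):
        # Reuse the same token for a repeated value so references stay consistent.
        if value not in self._forward:
            token = f"[{kind}_{uuid.uuid4().hex[:8]}]"
            self._forward[value] = token
            self._reverse[token] = value
        return self._forward[value]

    def detokenize(self, text):
        for token, value in self._reverse.items():
            text = text.replace(token, value)
        return text


def deidentify(text, vault):
    """Replace each matched identifier with a token issued by the vault."""
    for kind, pattern in PATTERNS.items():
        text = pattern.sub(lambda m, k=kind: vault.tokenize(k, m.group(0)), text)
    return text


vault = TokenVault()
note = "Patient MRN: 12345678, contact jane@example.com or 555-123-4567"
safe_note = deidentify(note, vault)   # send this to the model
restored = vault.detokenize(safe_note)  # only inside the trusted boundary
```

The model only ever sees `safe_note`. Re-identification happens after the response comes back, and only for callers the mapping service authorizes.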

Apply rules, confidence thresholds, and schema validation before outputs are accepted into downstream systems.
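One way to picture this gate is a single function that every model output passes through before anything downstream sees it. The sketch below assumes the model returns a dict with `category`, `summary`, and `confidence` fields. Those field names, the allowed categories, and the 0.85 threshold are all hypothetical and would need to be set per workflow.

```python
from dataclasses import dataclass

ALLOWED_CATEGORIES = {"routine", "urgent", "emergency"}  # hypothetical values
MIN_CONFIDENCE = 0.85  # hypothetical threshold; tune per workflow

REQUIRED_FIELDS = {"category": str, "summary": str, "confidence": float}


@dataclass
class ValidationResult:
    accepted: bool
    reasons: list


def validate_output(output):
    reasons = []

    # Schema validation: every required field must exist with the expected type.
    for name, expected in REQUIRED_FIELDS.items():
        if not isinstance(output.get(name), expected):
            reasons.append(f"schema: '{name}' missing or not {expected.__name__}")

    # Only run value checks once the shape is known to be valid.
    if not reasons:
        # Rule checks: values must come from a known, bounded set.
        if output["category"] not in ALLOWED_CATEGORIES:
            reasons.append(f"rule: unknown category '{output['category']}'")
        if not output["summary"].strip():
            reasons.append("rule: empty summary")
        # Confidence threshold: low-confidence outputs are never auto-accepted.
        if output["confidence"] < MIN_CONFIDENCE:
            reasons.append(f"confidence: {output['confidence']} below {MIN_CONFIDENCE}")

    return ValidationResult(accepted=not reasons, reasons=reasons)
```

Returning the reasons alongside the decision matters: rejected outputs can go straight into an exception log, which doubles as evidence during an audit.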

Require explicit review for high-impact outputs such as care-plan recommendations, triage suggestions, or incident summaries.
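A review requirement like this is easiest to enforce when it lives in the release path itself rather than in a policy document. The sketch below is a minimal, in-memory illustration: the `ReviewGate` class, the output type names, and the reviewer ID format are assumptions, and a real system would persist the queue and log every approval.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical type names mirroring the high-impact examples above.
HIGH_IMPACT_TYPES = {
    "care_plan_recommendation",
    "triage_suggestion",
    "incident_summary",
}


@dataclass
class ModelOutput:
    output_type: str
    content: str
    approved_by: Optional[str] = None


class ReviewGate:
    """Holds high-impact outputs until a named reviewer approves them."""

    def __init__(self):
        self.pending = []
        self.released = []

    def submit(self, output):
        if output.output_type in HIGH_IMPACT_TYPES:
            self.pending.append(output)
            return "pending_review"
        self.released.append(output)
        return "released"

    def approve(self, output, reviewer_id):
        if output not in self.pending:
            raise ValueError("output is not awaiting review")
        # Record who approved it so accountability is traceable later.
        output.approved_by = reviewer_id
        self.pending.remove(output)
        self.released.append(output)


gate = ReviewGate()
triage = ModelOutput("triage_suggestion", "Escalate to urgent care")
reminder = ModelOutput("appointment_reminder", "Your visit is on Tuesday")

triage_status = gate.submit(triage)      # held for a human
reminder_status = gate.submit(reminder)  # low impact, released directly
gate.approve(triage, reviewer_id="clinician-42")
```

The design choice worth noting is that there is no code path that releases a high-impact output without an `approved_by` value attached.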

Additional safeguards:

In our experience, compliant healthcare AI systems tend to follow a similar flow:

This isn't the only way to structure it, but the pattern holds up well across different clinical workflows.

Healthcare buyers will request a compliance documentation package during vendor review. Missing any part of it can stall your launch by weeks, so prepare it early.

Minimum package:

| Phase | Timeline | Priority outcomes |
|---|---|---|
| Foundation | Weeks 1-3 | Data inventory, risk register, baseline control matrix |
| Control implementation | Weeks 4-8 | Access controls, encryption posture, logging pipeline, review gates |
| Validation and readiness | Weeks 9-12 | Audit simulation, incident drill, procurement documentation pack |

Execution principles:

If you're evaluating partners for healthcare AI work, ask them to walk you through:

If you're getting vague answers during early conversations, that's a red flag.


Map out all the data your system touches and classify it by sensitivity. Everything else, from control design to legal docs, depends on getting this right first.

Some workflows can process de-identified or minimally sensitive data. High-value clinical workflows still require strict PHI handling controls, auditability, and role-based access.

Both require controlled access, secure handling, and accountable governance. Australian projects add APP-focused obligations, including tighter cross-border disclosure controls.

Because "highly accurate" still means it gets things wrong sometimes, and in healthcare, those edge cases can affect patient safety. Human review for high-impact outputs is as much about accountability as accuracy.

Compliance in healthcare AI isn't a checkbox you tick before launch. It's an architecture decision you make at the start. Teams that build HIPAA, Privacy Act, and NDIS requirements into their system design from day one move through procurement faster and carry less risk.

If you're planning a healthcare AI project this year, use this checklist as a starting point for your internal review or vendor evaluation. It won't cover every edge case in your specific domain, but it'll keep you from missing the big stuff.