
Many startups can get an agent demo running in a day. Shipping one that is measurable, safe, and cost-stable is the real work.

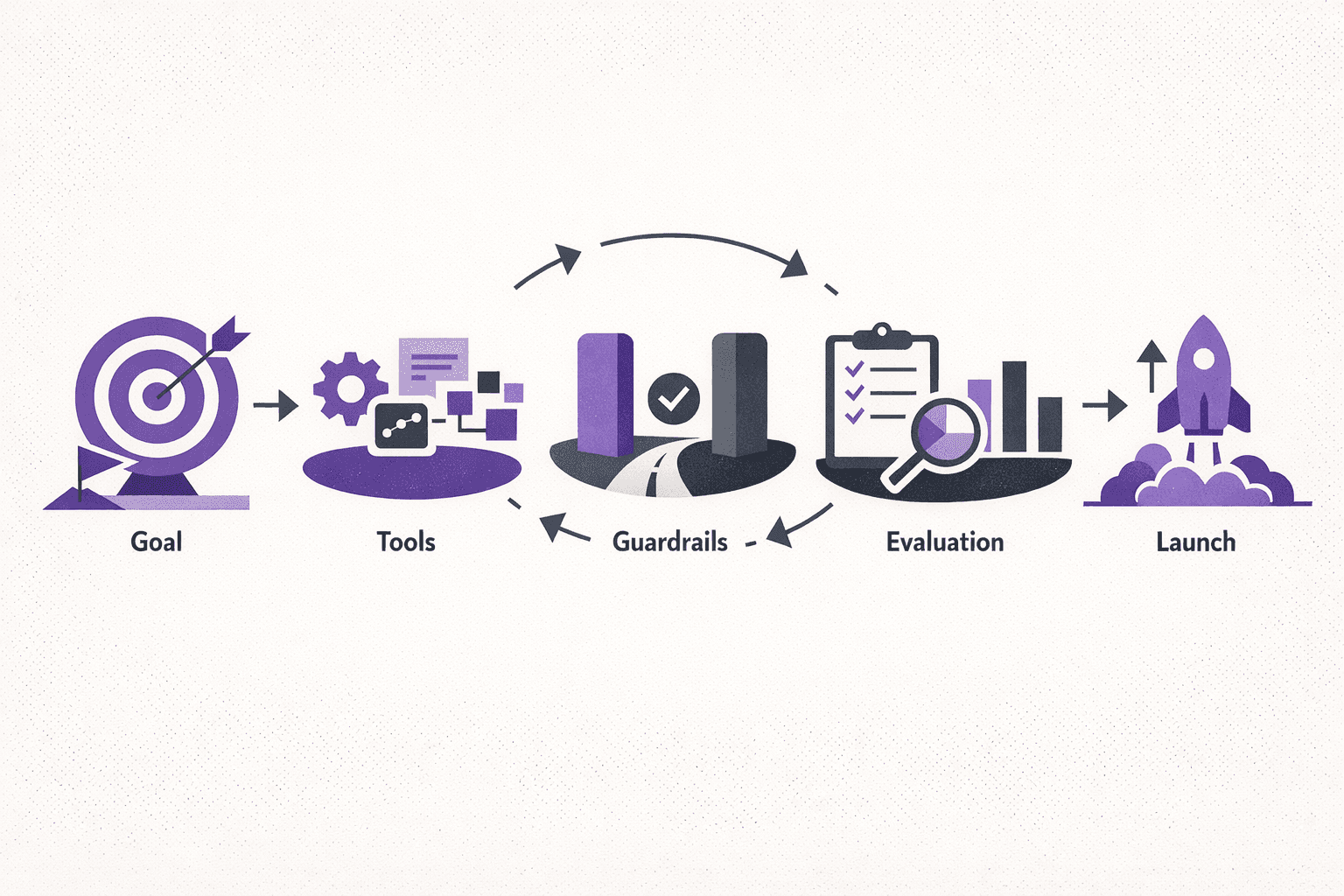

This guide is a practical framework for first production deployments. It covers problem selection, tool design, guardrails, evaluation, and rollout, with a focus on predictable operations rather than prototype novelty.

An AI agent is a system that takes a goal, plans steps, uses tools, and executes actions with persistent context. In production, that agent must also be measurable, controllable, and resilient under real-world constraints.

A production agent has five mandatory characteristics:

If any one of these is missing, it is a prototype, not a production agent.

Many teams jump to “agent” when the right solution is simpler. Use this decision matrix before writing any code.

| Need | Best fit | Why it works |
|---|---|---|
| Simple input → output response | Chatbot | No planning or tool use required |
| Repeatable steps with fixed logic | Workflow automation | Deterministic and cheap to run |
| Multi-step, variable path, tool use | AI agent | Planning + tool use adds flexibility |
| Multiple agents coordinating | Multi-agent system | Specialization improves accuracy at scale |

Rule of thumb: choose the simplest solution that still achieves the outcome. If a fixed workflow is sufficient, an agent adds unnecessary cost and risk.
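The decision matrix can also be written as a small function, which makes the "simplest solution first" rule explicit. This is a minimal sketch: the `Need` fields and the `best_fit` name are illustrative assumptions, not part of any library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Need:
    """Hypothetical description of a workload; field names are illustrative."""
    needs_tools: bool          # must call external tools or APIs
    variable_path: bool        # steps differ from request to request
    multi_step: bool           # more than one step to finish the task
    needs_coordination: bool   # several specialized agents must cooperate


def best_fit(need: Need) -> str:
    """Return the row of the decision matrix that matches the need."""
    if need.needs_coordination:
        return "Multi-agent system"
    if need.multi_step and need.variable_path and need.needs_tools:
        return "AI agent"
    if need.multi_step:
        # Fixed, repeatable steps: deterministic automation is enough.
        return "Workflow automation"
    return "Chatbot"


print(best_fit(Need(needs_tools=True, variable_path=False,
                    multi_step=True, needs_coordination=False)))
```

A multi-step task with a fixed path falls through to workflow automation even when it calls tools, which mirrors the rule of thumb above.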

Not all agents are built the same. Choose the pattern based on the complexity of the goal and the tools required.

Startups should begin with tool-use agents, which typically deliver the highest ROI for the lowest operational complexity.

A production agent needs a precise goal and measurable outcomes. Avoid vague goals like “improve onboarding.” Use explicit outcomes and measurable thresholds.

Good goal statements:

Define success metrics up front:

Scoping keeps the build small enough to ship and large enough to create value. The best first agent handles a narrow workflow end-to-end and includes only the tools required for that flow.

Scoping checklist:

Example scope (support agent):

Memory is the core advantage of agentic systems. It is also a major source of latency and cost. Define memory types before implementation.

Memory types to choose from:

Recommended approach for the first agent:

This approach limits context bloat and makes evaluation more deterministic.

Tool access is the difference between a demo and a production agent. It is also the biggest source of risk. Tooling must be explicit, scoped, and auditable.

Every tool needs a human-readable policy. If a tool cannot be explained in a sentence, it is too risky to expose.
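One way to make tooling explicit, scoped, and auditable is a small registry that refuses tools without a policy sentence, checks the caller's role, and logs every call. The sketch below assumes Python 3.9+; `Tool`, `ToolRegistry`, the role names, and the `lookup_order` tool are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Tool:
    name: str
    policy: str                    # one-sentence, human-readable policy
    allowed_roles: frozenset[str]  # scope: which roles may invoke the tool
    func: Callable[..., Any]


@dataclass
class ToolRegistry:
    tools: dict[str, Tool] = field(default_factory=dict)
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def register(self, tool: Tool) -> None:
        # Reject tools that cannot be explained in a sentence.
        if not tool.policy.strip():
            raise ValueError(f"tool {tool.name!r} needs a policy sentence")
        self.tools[tool.name] = tool

    def call(self, name: str, role: str, **kwargs: Any) -> Any:
        tool = self.tools.get(name)
        allowed = tool is not None and role in tool.allowed_roles
        # Log every attempt, including denied ones, before acting.
        self.audit_log.append(
            {"tool": name, "role": role, "args": kwargs, "allowed": allowed}
        )
        if tool is None:
            raise KeyError(f"unknown tool {name!r}")
        if not allowed:
            raise PermissionError(f"role {role!r} may not call {name!r}")
        return tool.func(**kwargs)


registry = ToolRegistry()
registry.register(Tool(
    name="lookup_order",
    policy="Reads one order by ID and never modifies data.",
    allowed_roles=frozenset({"support"}),
    func=lambda order_id: {"id": order_id, "status": "shipped"},
))
print(registry.call("lookup_order", role="support", order_id="A1"))
```

Logging denied attempts alongside successful ones keeps the audit trail useful for spotting prompts that push the agent toward tools it should not use.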

Guardrails keep the agent safe, predictable, and cost-effective. They should be in place before performance optimizations or fine-tuning.

Example policy: “Any write action to billing data requires human approval and is limited to authorized roles.”
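The example policy translates directly into a check that runs before any action executes. The following is a sketch under stated assumptions: the `Action` fields and the role names in `AUTHORIZED_ROLES` are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Optional

AUTHORIZED_ROLES = {"billing_admin", "finance_ops"}  # hypothetical role names


@dataclass(frozen=True)
class Action:
    resource: str                      # e.g. "billing"
    kind: str                          # "read" or "write"
    requested_by_role: str
    approved_by: Optional[str] = None  # ID of the human approver, if any


def check_policy(action: Action) -> tuple[bool, str]:
    """Return (allowed, reason) for the billing write policy."""
    if action.resource != "billing" or action.kind != "write":
        return True, "policy does not apply"
    if action.requested_by_role not in AUTHORIZED_ROLES:
        return False, "role not authorized for billing writes"
    if action.approved_by is None:
        return False, "human approval required"
    return True, "approved billing write"


print(check_policy(Action("billing", "write", "billing_admin")))
```

Returning a reason alongside the decision makes denials easy to log and to surface to the human reviewer.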

Evaluation is the most neglected part of AI agent development. Without it, success is subjective and regressions are invisible.

| Metric | Target | Notes |
|---|---|---|
| Task success rate | ≥ 90% | Measured on offline dataset |
| Factual accuracy | ≥ 95% | Verified against source data |
| Escalation rate | ≤ 10% | Human review only when necessary |
| Cost per task | ≤ $0.25 | Includes LLM + tool calls |
| Median latency | ≤ 8s | End-to-end completion |

Build this harness before launch. It becomes the baseline for every future change.
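A harness of this kind can start as a function that aggregates per-task results and compares them with the targets in the table. This is a minimal sketch: the `TaskResult` fields are assumptions about what each offline run records, and cost per task is taken as the mean across tasks, which the table does not specify.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class TaskResult:
    succeeded: bool
    factually_correct: bool
    escalated: bool
    cost_usd: float    # LLM + tool-call cost for this task
    latency_s: float   # end-to-end completion time


# Targets from the metrics table: (comparison, threshold).
TARGETS = {
    "task_success_rate": (">=", 0.90),
    "factual_accuracy": (">=", 0.95),
    "escalation_rate": ("<=", 0.10),
    "cost_per_task_usd": ("<=", 0.25),
    "median_latency_s": ("<=", 8.0),
}


def summarize(results: list[TaskResult]) -> dict[str, float]:
    """Aggregate per-task results into the five tracked metrics."""
    n = len(results)
    if n == 0:
        raise ValueError("no results to summarize")
    return {
        "task_success_rate": sum(r.succeeded for r in results) / n,
        "factual_accuracy": sum(r.factually_correct for r in results) / n,
        "escalation_rate": sum(r.escalated for r in results) / n,
        "cost_per_task_usd": sum(r.cost_usd for r in results) / n,
        "median_latency_s": median(r.latency_s for r in results),
    }


def check(metrics: dict[str, float]) -> dict[str, bool]:
    """Return, per metric, whether the target is met."""
    report = {}
    for name, (op, target) in TARGETS.items():
        value = metrics[name]
        report[name] = value >= target if op == ">=" else value <= target
    return report
```

Running `check(summarize(results))` on the same offline dataset before and after each change turns regressions into a failed boolean rather than a matter of opinion.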

Every production agent will fail. The goal is to fail safely, transparently, and in a way that preserves user trust.

Failure categories to plan for:

Recommended escalation pattern:

These paths can be evaluated in the test harness and measured in production.

Framework choice affects speed, reliability, and future maintenance. Use this quick comparison to align with the startup stage.

Recommendation: for the first production agent, start with direct API usage and minimal abstractions. Complexity can be added once metrics are stable.
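To illustrate what "direct API usage and minimal abstractions" can look like, here is a sketch of a tool-calling loop in plain Python. The `call_model` function and its reply format (`"tool_call"` or `"final"`) are hypothetical stand-ins for a provider SDK, not a real API; the step budget and the escalation string are likewise assumptions.

```python
import json
from typing import Any, Callable

# Hypothetical model interface: receives the message history and returns
# {"type": "tool_call", "name": ..., "arguments": {...}} or
# {"type": "final", "content": ...}. A real provider SDK would sit behind it.
ModelFn = Callable[[list[dict[str, Any]]], dict[str, Any]]


def run_agent(
    goal: str,
    call_model: ModelFn,
    tools: dict[str, Callable[..., Any]],
    max_steps: int = 5,
) -> str:
    """Loop: ask the model, run the requested tool, feed the result back."""
    messages: list[dict[str, Any]] = [{"role": "user", "content": goal}]
    for _ in range(max_steps):
        reply = call_model(messages)
        if reply["type"] == "final":
            return reply["content"]
        tool = tools.get(reply["name"])
        if tool is None:
            result = {"error": f"unknown tool {reply['name']}"}
        else:
            result = tool(**reply["arguments"])
        messages.append(
            {"role": "tool", "name": reply["name"], "content": json.dumps(result)}
        )
    # The step budget bounds cost and latency; hand off instead of looping.
    return "ESCALATE: step budget exhausted"
```

Because the model is injected as a function, the same loop can run against a scripted fake in tests and against a real provider in production.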

Costs vary by use case, but early estimates help with prioritization and fundraising. Use the following ranges for planning.

| Agent type | Typical scope | Cost range | Time range |
|---|---|---|---|
| Tool-use agent | 1 workflow + 2–3 tools | $10K–$25K | 2–4 weeks |
| RAG + agent | Retrieval + tool use | $20K–$45K | 3–6 weeks |
| Multi-agent | Orchestrator + 2–4 specialists | $35K–$75K | 5–8 weeks |
| Autonomous | Planner + executor + evaluation | $60K–$120K | 6–10 weeks |

Key drivers of cost include tool integration complexity, evaluation coverage, compliance requirements, and latency constraints.

Production readiness is not only about accuracy. It is also about how the agent is introduced to real users.

A controlled rollout protects the business and builds trust with users.
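One common way to implement a controlled rollout is percentage-based exposure with stable user bucketing, so the same user always gets the same experience as the percentage grows. This is a sketch of that general technique, not a method prescribed by this guide; the `in_rollout` name and the `salt` value are assumptions.

```python
import hashlib


def in_rollout(user_id: str, percent: int, salt: str = "agent-v1") -> bool:
    """Deterministically place a user in or out of the exposed cohort."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    # Map the first 4 bytes of the hash to a bucket in [0, 100).
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent


# Users exposed at 10% stay exposed when the rollout widens to 50%.
exposed_at_10 = [u for u in ("u1", "u2", "u3") if in_rollout(u, 10)]
print(all(in_rollout(u, 50) for u in exposed_at_10))
```

Changing the salt reshuffles the cohorts, which is useful when a later rollout should not reuse the same early users.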

Each pitfall is avoidable with the framework above.

Security cannot be retrofitted after launch. Start with data boundaries and explicit policies.

Minimum requirements for most startups:

If the product touches regulated data (healthcare, finance, education), add compliance-specific constraints from day one.

This example shows a realistic timeline for a first tool-use agent in a startup environment. Timelines vary based on data access and tool readiness.

Week 1: Discovery and scoping

Week 2: Build core agent workflow

Week 3: Guardrails and reliability

Week 4: Launch readiness

This timeline keeps scope tight while still delivering production readiness.

Use this sequence for any first production agent:

1. Start with a concrete outcome and measurable success targets before selecting tools or models.
2. Choose the minimum viable pattern that can solve the workflow reliably, then scale complexity gradually.
3. Enforce least privilege, policy constraints, and approval checkpoints before enabling production actions.
4. Create offline and end-to-end test harnesses so regressions, latency, and cost drift are visible.
5. Use shadow mode, progressive exposure, and explicit human handoff paths to protect user trust.
6. Track accuracy, escalation rate, cost per task, and latency. Tune prompts and tools from real data.

This approach reduces risk, keeps costs predictable, and delivers a clear path to value.

Startups often benefit from external expertise when timelines are tight or the stack is complex. A partner can bring proven architectures, evaluation frameworks, and security practices.

Codse Tech delivers production-grade AI agent development in fixed-scope sprints with measurable outcomes. For next steps, review the AI agent development service page or explore AI integration services for broader product upgrades.

Scope, build, and launch production agents with tool use, guardrails, and measurable reliability.

Embed AI into existing products with secure connectors, structured outputs, and evaluation harnesses.

AI agent development is the process of building systems that can plan tasks, use tools, and execute actions to achieve a goal. In production, it requires guardrails, evaluation, and controlled tool access.

Costs vary by complexity, but most startup-ready agents require a discovery phase, 2-6 weeks of build time, and ongoing evaluation. The largest cost drivers are tool integrations, evaluation coverage, and data security requirements.

A scoped, tool-use agent can be shipped in 2-4 weeks when the data and tooling are ready. More complex multi-agent or autonomous systems typically take longer due to evaluation requirements.

A chatbot responds to prompts. An AI agent plans steps, uses tools, and completes tasks. Agents therefore require stricter governance, more thorough evaluation, and tighter cost controls than chatbots.

Direct API usage with tool calling is the most reliable path for a first production agent. Higher-level frameworks can be added later when metrics are stable and use cases expand.